Material

Abstract

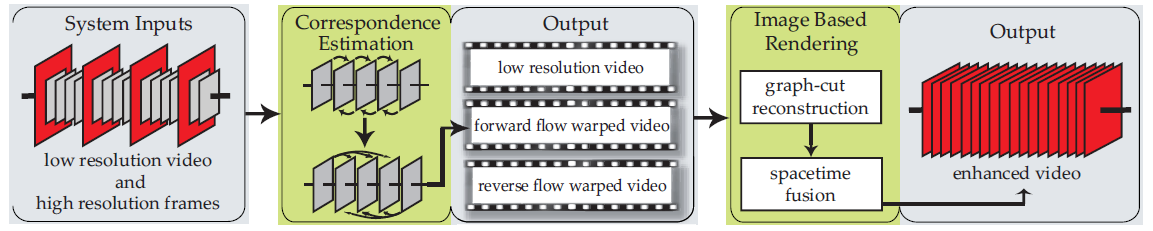

We present solutions for enhancing the spatial and/or temporal resolution of videos. Our algorithm targets the emerging consumer-level hybrid cameras that can simultaneously capture video and high-resolution stills. Our technique produces a high spacetime resolution video using the high-resolution stills for rendering and the low-resolution video to guide the reconstruction and the rendering process. Our framework integrates and extends two existing algorithms, namely a high-quality optical flow algorithm and a high-quality image-based rendering algorithm. The framework enables a variety of applications that were previously unavailable to the amateur user, such as the ability to (1) automatically create videos with high spatiotemporal resolution, and (2) shift a high-resolution still to nearby points in time to better capture a missed event.